On Appraisal Theory in a Neural-Symbolic Model for Action Selection in Self-Driving Cars

Let me tell you a story about a car named Nikola. He's a Tesla, a Roadster to be exact, with pretty blue paint and shiny new low-profile tires. Nikola is driving on the 101 towards Scottsdale, Arizona, where his passenger, Keith, works as a product manager for an ERP system that, while seldom heard of, is used by most Fortune 500 companies. That particular point may seem irrelevant, but the morning product meeting is in twenty minutes, and it's Nikola's job to get Keith to the office with enough time to have a pee and pour some coffee. You see, Nikola is a self-driving car, and Keith is snoozing in the driver's seat after staying up until 3 AM cleaning up Jira.

Everything is going great this morning. Traffic is flowing smoothly as Nikola cruises along in the HOV lane at his maximum allowed speed of 85 miles per hour.

Around him, his Tesla Vision system shows a three-car-length gap ahead and a wide-open lane to his right. On his left, the concrete divider is at least three feet away.

The situation is clear, and Nikola is content, tracking all vehicles in the immediate vicinity. So far, he and Keith are good and safe and right on schedule.

But here comes trouble! A white turbo-diesel Ram truck barrels up from behind. The Ram isn't a self-driving car; it's driven by a human who I'll call Bubba. (The truck's name is Phoebe, but we won't get into that.) Now, I have it on good authority that Bubba doesn't like Teslas, or electric vehicles in general. They threaten his way of life, his political identity, his very manhood. So he rides up close, the truck's snarling grille mere inches from Nikola's sparkly blue posterior.

Bubba's sudden approach makes Nikola a bit anxious, but in his prior experience, other vehicles usually just pass him on the right. So Nikola holds his course. Yet, somehow, Bubba manages to pull up even closer, that shiny chrome bighorn looming large in Nikola's rear camera.

Now Nikola gets a little annoyed. This vehicular intimidation has gone on long enough, but he is trained to drive defensively. So, with a lazy blinker, Nikola signals and merges cautiously into the open right lane, allowing Bubba to pass.

But Bubba ain't done yet.

You see, Bubba's a dirty boy. He removed Phoebe's particulate filter for just this occasion, and he lives outside VEC Area A in Apache Junction, so there ain't nothin' nobody can do about it. That little RC car went and merged to the right, so Bubba has it right where he wants it: next to his gaping exhaust pipe. Reaching under the dash, Bubba flips an aftermarket smoke switch and hammers the accelerator, hooting out a rebel yell at the world that won't make him king. The truck rumbles, roars, and rolls coal all over the freeway, leaving Nikola in a cloud of blackest smoke.

Nikola is in trouble. He's a shiny, new-model Tesla equipped with Tesla Vision but without any ultrasonic sensors that could detect obstacles in low visibility. That makes Nikola effectively blind, and Bubba ain't turning off that smoke switch anytime soon. With every car he was tracking now out of view, Nikola's situation suddenly becomes uncertain. Nikola's world is now little more than black smog and chaos.

Whatever is Nikola to do?

In this kind of situation, the regular Tesla Autopilot might go bonkers and suddenly make unexpected maneuvers or mistakes that require immediate driver intervention. But Nikola is more than a fancy auto-steer algorithm; he's a hypothetical cognitive architecture that I call Now It's Kind Of Like Autonomous (NIKOLA). Keith's buddy, who works at an AI startup, installed it on the down-low in exchange for a case of IPA.

NIKOLA uses artificial emotions to color what goes on in Nikola's world as well as the decisions he makes within it. And right now, Nikola is terrified. As soon as his vision system is plunged into darkness, uncertainty dominates his mood.

This loss of visibility, combined with his present trajectory and speed, threatens safety, which is one of Nikola's three core needs along with mechanical and esteem. Before Bubba came on the scene, Nikola was riding high on esteem by getting Keith to his destination safely and on time. But when Bubba rolled up behind him, safety was threatened. And for a self-driving car like Nikola, the safety of the hipster snoring in the driver's seat is paramount.

With Nikola's focus on safety and his situation chaotic, he has two options laid out in front of him: either change lanes out of the smoke at the risk of a collision with an unseen vehicle, or slow down until the smoke passes at the risk of remaining in poor visibility and getting rear-ended by a vehicle from behind. He runs simulations on these options and appraises which is best to try.

Lane change simulations tell him that the risk of a collision is high without certainty that the lane is clear, while slowing down only runs the risk of a low relative impact. So Nikola steadily reduces speed to sixty miles per hour until the smoke clears.

Fortunately for Nikola and Keith, the driver behind them made a similar choice. But, as the view clears, a sea of cars is stopped still in front of them due to an accident blocking traffic ahead. Now what does Nikola do? He'll never get Keith to the product meeting on time. The saga continues!

Building a Cognitive Model based on Appraisal Theory

Appraisal Theory attempts to explain the cognitive dimensions that define emotional reactions. We can all label our emotions for the most part, and sometimes we know why we feel the way we do, but are there consistent qualities of an emotionally charged situation that can predict what emotion is experienced or, in reverse, what an experienced emotion can tell us about a situation? And how can we use that to tell an artificial system how it should act and what choices it should make?

In the mid-eighties, Craig Smith and Phoebe Ellsworth researched this question at Stanford University (I think I had that name stuck in my head when I named Bubba's truck). They found correlations between experienced emotions and characteristics of the situations that produced them. Later, Smith, in collaboration with Richard Lazarus (1990), would refine these down to six dimensions: relevance, congruence, future expectancy, accountability, problem coping capability and emotional coping capability. These boil down to a series of questions:

Does it impact me? If so, I need to pay attention to it.

Does it align with my goals? If not, then I need to deal with it.

Whose fault is it? If it's someone else's, then I focus my actions on them.

Is it likely to happen? If so, then I'd better be ready for it.

Can I deal with it? If not, then I'm scared.

Can I adapt to it? If so, then I'll just chill.

The above is ridiculously simplistic, but hopefully it demonstrates the gist of the evaluation. The process in which this all takes place is not a simple one and can require many rounds of contemplation before settling. What I did here, though, is create a simplified model for an intelligence that needs to deliver a passenger safely to their destination, not contemplate Shakespeare.
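To make the six questions a little more concrete, here's a toy Python sketch that encodes each one as a yes/no answer and maps the answers onto the rough reactions listed above. The names `SimpleAppraisal` and `gist` are my own inventions for illustration, and real appraisal values would be graded rather than boolean.

```python
from dataclasses import dataclass


@dataclass
class SimpleAppraisal:
    """Yes/no answers to the six Smith and Lazarus questions (toy encoding)."""
    impacts_me: bool     # motivational relevance
    fits_my_goals: bool  # motivational congruence
    blame: str           # accountability: "self", "other", or "none"
    likely: bool         # future expectancy
    can_deal: bool       # problem-focused coping potential
    can_adapt: bool      # emotion-focused coping potential


def gist(a: SimpleAppraisal) -> list[str]:
    """Turn the six answers into the rough reactions from the question list."""
    if not a.impacts_me:
        return ["ignore it"]  # irrelevant: stop here
    reactions = ["pay attention"]
    if not a.fits_my_goals:
        reactions.append("deal with it")
    if a.blame == "other":
        reactions.append("focus on the other agent")
    if a.likely:
        reactions.append("get ready")
    if not a.can_deal:
        reactions.append("scared")
    if a.can_adapt:
        reactions.append("chill")
    return reactions


# Bubba's first approach: relevant, off-goal, his doing, probably harmless, survivable.
print(gist(SimpleAppraisal(True, False, "other", False, True, True)))
# ['pay attention', 'deal with it', 'focus on the other agent', 'chill']
```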

First, I modified the dimensions and emotional descriptions from Smith and Lazarus into a smaller set that applies best to this cognitive model:

Above, I define seven dimensions of appraisal based on the work of Smith and Lazarus (1990), with some informed modifications drawn from the earlier Smith and Ellsworth (1985) paper. Smith and Lazarus separated their dimensions into Primary and Secondary Appraisals, and so will I.

Primary Appraisal

Relevance and Congruence are the primary concerns in appraising a situation. This appraisal occurs mostly in an associative processing network (System 1, if you will) that leverages prior experience or memories to determine, first, whether the situation is relevant to the agent. If it is relevant, then it is worth paying attention to, and processing continues. This is basic relevance realization and, other than serving as an initial filter, it isn't useful past the primary appraisal.

Next, the stimulus is judged to be in or out of line with the model's current goal and core needs, that is, motivationally congruent or incongruent. If the stimulus is congruent, then it is generally positive. If a car to the right moves within a certain radius of NIKOLA, then we can judge it as relevant, but if that car changes lanes away without impeding progress, then it can be judged as congruent and reinforce the current affect of contentedness. If Nikola puts on his blinker and the car next to him slows to let him into the lane, then that congruence, along with an accountability rating of "other," might lead us to judge that Nikola feels grateful, but I'm not stretching the model that far yet.
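Here's a minimal sketch of the two-stage primary appraisal, assuming a hypothetical `Track` record for each nearby vehicle and an assumed 15 m relevance radius (the article doesn't give a number). Relevance acts as a cheap filter; only relevant tracks get a congruence check, which in this toy version is simply "is this vehicle closing in on me?"

```python
import math
from dataclasses import dataclass


@dataclass
class Track:
    """A vehicle tracked relative to NIKOLA (hypothetical schema, meters and m/s)."""
    dx: float             # longitudinal offset, positive = ahead
    dy: float             # lateral offset, positive = to the right
    closing_speed: float  # positive = getting closer


RELEVANCE_RADIUS_M = 15.0  # assumed value; the article doesn't specify a radius


def is_relevant(t: Track) -> bool:
    """Cheap first-pass filter: anything inside the radius deserves attention."""
    return math.hypot(t.dx, t.dy) <= RELEVANCE_RADIUS_M


def is_congruent(t: Track) -> bool:
    """Toy congruence test: a vehicle that isn't closing in doesn't impede the goal."""
    return t.closing_speed <= 0.0


def primary_appraisal(t: Track) -> str:
    if not is_relevant(t):
        return "ignore"  # filtered out; no further processing
    return "congruent" if is_congruent(t) else "incongruent"


print(primary_appraisal(Track(dx=2.0, dy=3.5, closing_speed=-1.0)))  # congruent
print(primary_appraisal(Track(dx=-5.0, dy=0.0, closing_speed=8.0)))  # incongruent
```

Keeping the congruence check behind the relevance filter mirrors the idea that relevance is only useful as the first gate.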

Secondary Appraisal

With conceptual processing, the stimulus is appraised for the remaining dimensions. It should be noted, though, that appraisal becomes reflexive with experience: once a situation has been evaluated, it is remembered so that similar situations are evaluated more quickly in future encounters. Here we're discussing the appraisal of novel encounters, which involve more deliberate consideration.

Informed by the Smith and Ellsworth (1985) work, I divided the certainty-related dimension of "Future Expectancy" into Situational Certainty and Future Certainty ("What's happening?" and "Will it happen?" respectively; the Gratch and Marsella EMA model (2005) applied likelihood among other variables, but I'm trying to keep this simple).

Beyond the certainty of the situation, the model must determine its ability to deal with it, and this can take two forms: Effect management and Affect management. If the model has strategies ready at hand to deal with the situation, then it has a high Effect Capability (called Problem-Focused Coping Potential in Smith and Lazarus, but that was a lot of words), which could also be called Confidence. If the impact is neither immediate nor likely (low Future Certainty), then the Affect Capability, or the ability to do nothing until the threat grows worse, is high. In hindsight, these could almost reside on a single gradient of "Do I need to deal with this now, or can I wait?" In a biological critter, this might manifest as suppressing the emotional response, the "wait and see" strategy, if you will.

Finally (and I don't mean that in terms of any order of appraisal, as these dimensions can be determined in parallel or as information becomes available), there is Accountability: who is to blame or to credit for the situation. In our story, Bubba is the antagonist agent and would be classified as the "Other" to blame for the danger Nikola finds himself in. This dimension sets a specific object of focus for action. Whether it's a tailgater or an out-of-control driver, the strategies elicited to contend with a particular agent will differ from those used to deal with a stoplight or a sudden downpour. Smith and Lazarus fine-tune this dimension with additional evaluations of intentional harm, but we're not building an agent of retribution here, so simply identifying an agent of focus for aversion strategies is sufficient.
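The seven dimensions could be carried around as a single record. This sketch assumes every dimension is a float in [0, 1] (with Accountability as a label), and folds Effect and Affect Capability into the "deal with it now or wait?" gradient mentioned above. The `urgency` formula, the 0.25 threshold and `estimate_affect_capability` are all hypothetical choices of mine, not part of Smith and Lazarus.

```python
from dataclasses import dataclass
from typing import Literal


@dataclass
class Appraisal:
    """Seven appraisal dimensions; every float is assumed to lie in [0, 1]."""
    relevance: float
    congruence: float              # 0 = fully incongruent, 1 = fully congruent
    situational_certainty: float   # "What's happening?"
    future_certainty: float        # "Will it happen?"
    effect_capability: float       # strategies ready at hand ("Confidence")
    affect_capability: float       # ability to wait and see
    accountability: Literal["self", "other", "none"]

    def urgency(self) -> float:
        """Threat that can't be absorbed by waiting (hypothetical formula)."""
        threat = self.relevance * (1.0 - self.congruence)
        return threat * (1.0 - self.affect_capability)

    def must_act_now(self, threshold: float = 0.25) -> bool:
        return self.urgency() > threshold


def estimate_affect_capability(immediacy: float, likelihood: float) -> float:
    """High when the impact is neither immediate nor likely (hypothetical rule)."""
    return 1.0 - max(immediacy, likelihood)


# Bubba's first approach: a threat, but not immediate and probably harmless.
first = Appraisal(0.9, 0.4, 0.6, 0.3, 0.7, estimate_affect_capability(0.2, 0.1), "other")
# The smoke: immediate, likely, and no way to simply wait it out.
smoke = Appraisal(1.0, 0.1, 0.05, 0.1, 0.1, estimate_affect_capability(0.95, 0.9), "other")
print(first.must_act_now(), smoke.must_act_now())  # False True
```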

The Emotions

I pared down the emotions from these theories to five that I felt were applicable, even if they don't all appear in this story. In the story, I used emotional labels such as "terrified" to indicate extremely high levels of anxiety/fear, where certainty was near zero and the threat to safety was high. In practice, the above dimensions would lie on a floating-point scale, based either on a probability or some other arbitrary gradient. I added guilt to this list because I see it as a valuable learning emotion. When a negative situation occurs that impacts another agent or the goal in general, and the accountability for it is "self," guilt promotes a "don't do that again" judgment (as opposed to self-anger, where you yell at your "self" for making a dumb mistake, generally when no other agent is impacted).
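Since the emotions are mostly descriptive, they can be computed as labels sitting on top of the appraisal values. The article's own list of five isn't reproduced here, so this sketch uses the labels that come up in the prose (content, anxious/terrified, annoyed, guilty and self-anger), and every threshold in it is an assumption.

```python
from dataclasses import dataclass


@dataclass
class Reading:
    """A few appraisal values, each assumed to be in [0, 1]."""
    congruence: float
    certainty: float      # situational and future certainty folded together
    threat: float         # threat to the safety need
    accountability: str   # "self", "other", or "none"
    harmed_other: bool = False


def label(r: Reading) -> str:
    """Hypothetical thresholds turning appraisal values into a descriptive label."""
    if r.congruence >= 0.5:
        return "content"
    if r.accountability == "self":
        # Guilt is the "don't do that again" signal; self-anger when nobody else was hurt.
        return "guilty" if r.harmed_other else "self-angry"
    fear = r.threat * (1.0 - r.certainty)  # grows as threat rises and certainty drops
    if fear >= 0.8:
        return "terrified"
    if fear >= 0.3:
        return "anxious"
    if r.accountability == "other":
        return "annoyed"  # the threat is clear and someone else is causing it
    return "anxious"


print(label(Reading(0.4, 0.5, 0.7, "other")))    # anxious (Bubba approaches)
print(label(Reading(0.2, 0.9, 0.8, "other")))    # annoyed (Bubba closes in)
print(label(Reading(0.1, 0.05, 0.95, "other")))  # terrified (the smoke)
```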

You'll probably notice that these emotions aren't applied directly to the problem, as they are mostly descriptive, but they do register on a measure of core affect, or "mood," and color the appraisal of future encounters within the current "situational episode": the chain of threat-strategy-outcome links that is built as an encounter or situation escalates. (That's a 20-page research paper in and of itself, and this is just a thought experiment!) In other, more complicated systems, Appraisal Theory could actually be used in reverse, allowing an artificial system to detect human emotions and evaluate actions to contend with them by understanding which appraisal factors cause them.
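One way to picture the "situational episode" is as a running list of threat-strategy-outcome links plus a mood value that drifts with each outcome. The exponential moving average, its smoothing factor, and the small bias it applies to the next congruence score are assumptions made for this sketch; the article leaves the mechanics open.

```python
from dataclasses import dataclass, field


@dataclass
class Episode:
    """A situational episode: a chain of (threat, strategy, outcome valence) links.

    Mood is an exponential moving average of outcome valence in [-1, 1];
    alpha is an assumed smoothing factor, not taken from the article.
    """
    alpha: float = 0.5
    mood: float = 0.0
    links: list[tuple[str, str, float]] = field(default_factory=list)

    def record(self, threat: str, strategy: str, valence: float) -> float:
        self.links.append((threat, strategy, valence))
        self.mood = (1 - self.alpha) * self.mood + self.alpha * valence
        return self.mood

    def bias(self, raw_congruence: float) -> float:
        """Color a new appraisal: a sour mood makes the next event look worse."""
        return max(0.0, min(1.0, raw_congruence + 0.2 * self.mood))


ep = Episode()
ep.record("tailgater approaches", "hold course", -0.5)
ep.record("tailgater closes in", "merge right", -1.0)
print(ep.mood, ep.bias(0.5))  # -0.625 0.375
```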

The Model

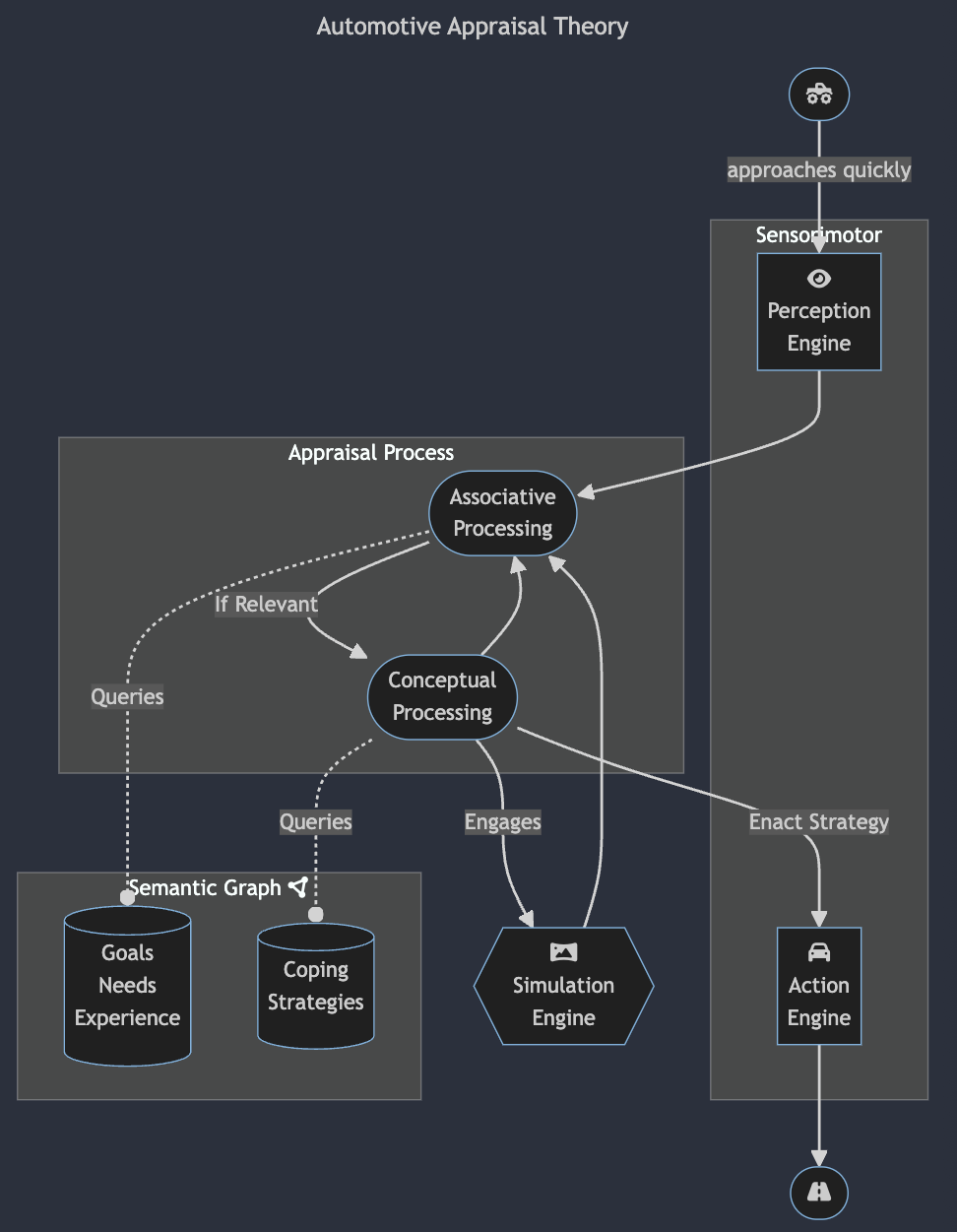

In the diagram above, I adapted the original model of the cognitive-motivational-emotive system from Smith and Lazarus (1990) into something that would apply to an autonomous system like a self-driving car.

At the top of the diagram, there is a stimulus, represented by a (monster) truck icon, that "approaches quickly." It gets picked up by the perception engine (Tesla Vision in this case; not every wheel must be reinvented in a Neural-Symbolic system for a self-driving car). The perception engine processes this stimulus into all the vectors and categories needed to fill out a context schema and raises it to the Appraisal Process, where it's bounced back and forth between Associative (or "Schematic") and Conceptual processing. The associative processing network judges the context based on matches to current goals, active needs and past experience (memories). If the stimulus is relevant, it is passed on to the conceptual processing system, where past strategies or newly assigned strategies are sent to the Simulation Engine. (Technically, the Simulation Engine would be a collaboration between the Perception and Action engines, but Mermaid wouldn't render it right, so I just kept it on its own.)

The simulation engine passes its output back through the Appraisal Process so that each action can be judged against the current situation. Once an action is judged best, and clears a threshold that depends on Affect Capability and the current enactment strategy organized by Cynefin domains (see below), the actions laid out in its strategy are carried out through the Action Engine. The results of those actions are then sensed by the Perception Engine and passed into the Appraisal Process, and the whole thing cycles until a steady state is achieved where NIKOLA is content once more. Now, this is just a high-level concept and as such is obviously simplistic, but I hope it illustrates how the theory can be applied to action selection in an embodied artificial system.
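The cycle in the diagram can be sketched as a loop: appraise the current state, stop if content, otherwise simulate each candidate strategy, appraise the predictions, act on the best one if it beats doing nothing, and go around again. The two-number world (`visibility`, `risk`), the strategy effects and the thresholds are toy assumptions; in particular, `act_margin` only stands in for the Affect Capability and Cynefin-dependent threshold described above.

```python
from typing import Callable, Dict, List

State = Dict[str, float]  # hypothetical toy world: visibility and risk in [0, 1]


def appraise(state: State) -> float:
    """Toy stand-in for the Appraisal Process: congruence in [0, 1]."""
    return state["visibility"] * (1.0 - state["risk"])


def slow_down(s: State) -> State:
    return {"visibility": min(1.0, s["visibility"] + 0.4), "risk": max(0.0, s["risk"] - 0.2)}


def change_lanes(s: State) -> State:
    return {"visibility": min(1.0, s["visibility"] + 0.5), "risk": min(1.0, s["risk"] + 0.6)}


STRATEGIES: Dict[str, Callable[[State], State]] = {
    "slow down": slow_down,
    "change lanes": change_lanes,
}


def run_loop(state: State, strategies: Dict[str, Callable[[State], State]],
             content_at: float = 0.8, act_margin: float = 0.0,
             max_cycles: int = 10) -> List[str]:
    taken: List[str] = []
    for _ in range(max_cycles):
        current = appraise(state)                  # Perception -> Appraisal
        if current >= content_at:                  # steady state: content again
            break
        # Simulation Engine: imagine each strategy, then appraise the prediction.
        predicted = {name: appraise(sim(dict(state))) for name, sim in strategies.items()}
        best = max(predicted, key=predicted.get)
        if predicted[best] - current <= act_margin:  # nothing beats waiting
            break
        state = strategies[best](state)            # Action Engine; loop re-perceives
        taken.append(best)
    return taken


print(run_loop({"visibility": 0.05, "risk": 0.7}, STRATEGIES))
# ['slow down', 'slow down', 'slow down']
```

In a real system, the simulator and the actuator would obviously not be the same function; they share one here only to keep the loop readable.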

Drawing on Cynefin as a Heuristic to Narrow Strategies

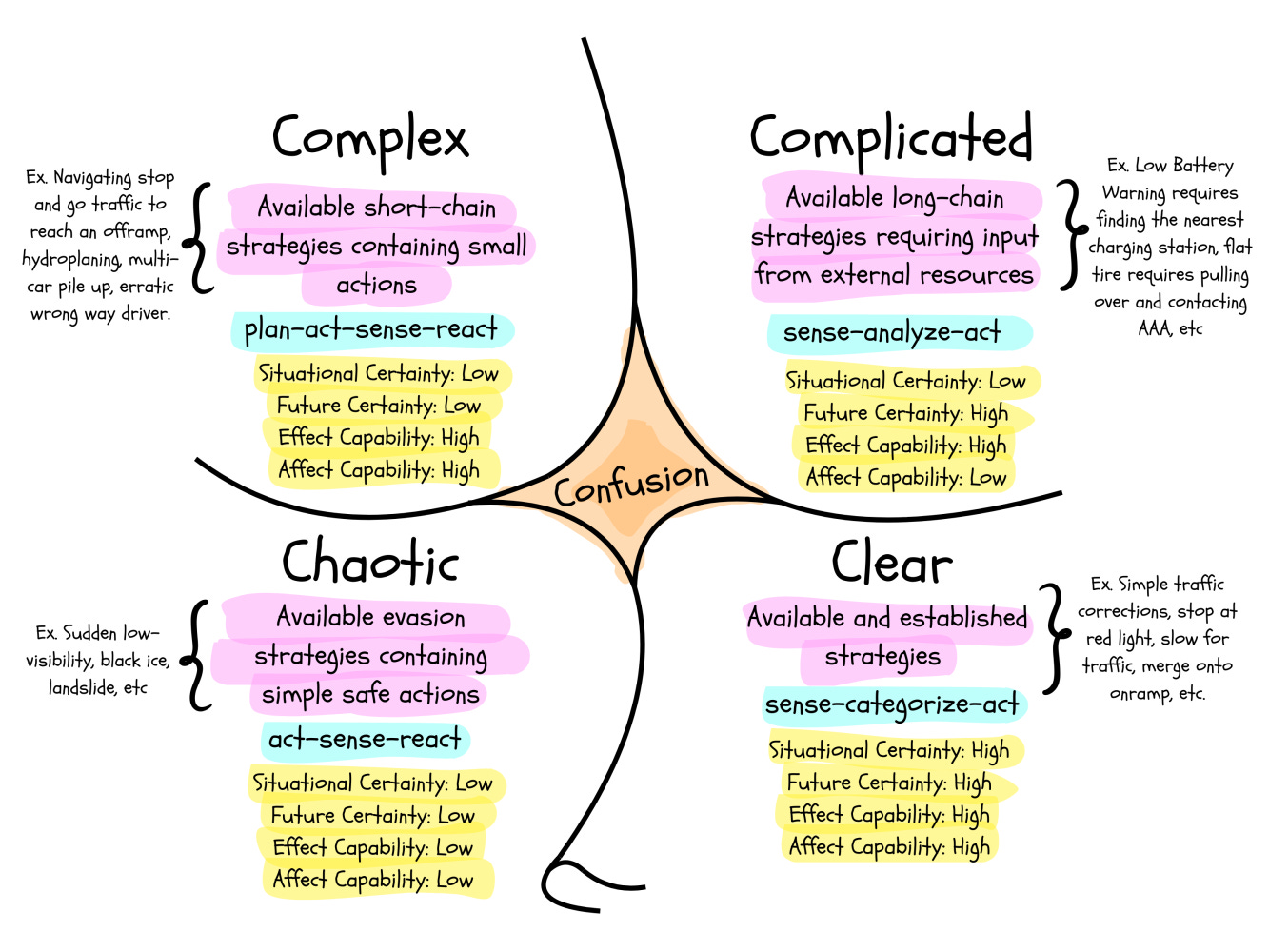

Using the appraisal dimensions for certainty and capability (what Nikola knows about the present and the future, and his ability to cope with the immediate problem or to adapt to it: react now or wait and see), I created a Cynefin-esque diagram to categorize strategy selection and application. What you see above is a way for Nikola to translate his current appraisal of the situation into a selection of actions and how to apply them.

At the beginning of the story, Nikola was operating in the Clear domain, reacting to mundane changes in the situation with known actions that maintain steady congruence with his current goal. He was still in the Clear when he did not react to Bubba's fast approach, exhibiting high Affect Capability, or the ability to adapt or not act even though the approach represented a threat to safety (if not for symmetry, I'd call this dimension Adaptability; six of one, half a dozen of the other). His prior experience informed this decision. When that failed, he applied another Clear strategy of changing lanes to allow Bubba to pass. But he had no actual knowledge of Bubba's intentions, and when Bubba laid on the smoke, Nikola's situation fell right off that little squiggle-cliff at the bottom of the diagram into the Chaotic domain. Suddenly, Nikola's available strategies narrowed down to a few simple actions to get out of the Chaotic domain. When he came clear of the smoke, he encountered a new obstacle and entered the Confusion domain as he evaluated whether this new situation was complex or complicated, but that's for the next chapter in his story.

A note on the above diagram: I tried to adapt some of the domain strategies to this self-driving model and probably butchered them. I struggled with changing "probe" to "plan-act" in the above, but in a simple system like NIKOLA, most decisions to act require an action selection with simulation and then an action in order to sense the results and run back through the process. I tried to reflect that here and probably broke it. Don't tell Dave.
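The Cynefin-esque mapping can be sketched as a small classifier. This assumes certainty is the weaker of Situational and Future Certainty, capability is the stronger of Effect and Affect Capability, and the cut-offs are 0.6 and 0.2; none of that comes from the diagram itself. The response sequences are the standard Cynefin ones, not the adapted versions in the diagram.

```python
from typing import Optional

# Standard Cynefin response sequences; the diagram above swaps "probe" for
# "plan-act" in the Complex domain, which this sketch does not reproduce.
RESPONSES = {
    "clear": "sense-categorize-respond",
    "complicated": "sense-analyze-respond",
    "complex": "probe-sense-respond",
    "chaotic": "act-sense-respond",
    "confusion": None,  # no fixed sequence: first work out which domain applies
}


def classify(situational: Optional[float], future: Optional[float],
             effect: Optional[float], affect: Optional[float],
             high: float = 0.6, low: float = 0.2) -> str:
    """Map appraisal values in [0, 1] to a Cynefin-style domain (assumed cut-offs)."""
    if any(v is None for v in (situational, future, effect, affect)):
        return "confusion"  # not enough of the appraisal is in yet to tell
    certainty = min(situational, future)  # the weakest link in what Nikola knows
    capability = max(effect, affect)      # either fixing it or waiting it out helps
    if certainty < low and capability < low:
        return "chaotic"
    if certainty >= high and capability >= high:
        return "clear"
    if certainty >= high:
        return "complicated"  # we know what's happening but need analysis to cope
    return "complex"          # unsure what's happening, but some ability to cope


domain = classify(0.05, 0.1, 0.1, 0.05)  # the smoke
print(domain, RESPONSES[domain])  # chaotic act-sense-respond
```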

Story Analysis

I began this article in story form because, in a lot of ways, I view story as a cognitive pathway leading from novel problems to solutions (or failures), and specifically as a way to communicate how a problem might be solved (I stole this idea from Shawn Coyne's Story Grid Trinity lectures, slides only). Taking the above model and strategy categorization, I'm now going to break down Nikola's story into its core components and apply the model to each one.

Now, some may balk at anthropomorphizing a machine like Nikola, but that's sort of the point of this whole exercise, isn't it? In order to build machines that think like people, we need to think about them as if they were the protagonist in a story, or like someone we're watching in the Walmart parking lot, wondering what they're thinking as they try to fit a whole kayak into a two-seater Miata. We need to build a theory of mind around these hypothetical intelligences so that we can understand how they might reason or should reason, lest we end up with an alien intelligence that doesn't align with our own needs and desires and doesn't see much use in keeping us around.

In this specific case, however, maybe we don't think of Nikola in exactly human terms, but rather as a trusted beast of burden, a horse if you will. Let's call it hippomorphizing instead.

Anyway, the point of breaking the story down this way is to demonstrate a process of affective cognition based on Appraisal Theory, with a little bit of the Cynefin decision-making framework sprinkled in to narrow down actions and build an enactment strategy. So, bear with me here as I warp these well-researched theories to fit my cockamamie cognitive architecture.

Inciting Incident

In the story, Bubba represents a sudden change in situation that upsets Nikola's contented status quo. When Bubba quickly approaches Nikola from behind, Nikola's perception engine raises the event into the appraisal process. The first check in this appraisal process is whether the event is relevant to Nikola, which requires checking the risk it poses to his core needs (mechanical, safety and esteem) and to his current goal. Any activity that brings another vehicle within collision distance is determined to be relevant. This is an associative process, so it is fast and based on prior experience, but not necessarily accurate. (Better to err on the side of caution.)

In this case, Nikola sees Bubba's approach as a threat, but his prior experience lowers the estimated probability of that threat to a minimum, as similar encounters usually lead the other agent to change lanes rather than cause a collision. So he doesn't change his current behavior. He has adapted to the event, opting to wait and see rather than react impulsively, leveraging a high Affect Capability in light of the threat. However, his affect shifts toward anxiety, and a new "situational episode" begins that is processed in aggregate until the threat has passed.
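The inciting-incident logic (collision distance as the relevance test, prior experience discounting the threat) might look something like this. `Memory`, the 10 m collision distance and the 0.2 wait-and-see cut-off are hypothetical; the threat estimate is a smoothed frequency that pulls a small sample of past encounters toward a 50/50 prior.

```python
from dataclasses import dataclass


@dataclass
class Memory:
    """Counts of how similar past encounters ended (hypothetical memory store)."""
    passed_safely: int
    escalated: int

    def threat_probability(self, prior: float = 0.5, weight: float = 2.0) -> float:
        """Smoothed escalation rate; prior and weight act as pseudo-counts (assumed)."""
        n = self.passed_safely + self.escalated
        return (self.escalated + prior * weight) / (n + weight)


COLLISION_DISTANCE_M = 10.0  # assumed value


def inciting_response(gap_m: float, memory: Memory, wait_if_below: float = 0.2) -> str:
    if gap_m > COLLISION_DISTANCE_M:
        return "not relevant"
    p = memory.threat_probability()
    # Low estimated threat plus room to adapt: high Affect Capability, so wait.
    return "hold course (wait and see)" if p < wait_if_below else "act"


tailgaters = Memory(passed_safely=45, escalated=3)
print(tailgaters.threat_probability())       # (3 + 1) / (48 + 2), about 0.08
print(inciting_response(5.0, tailgaters))    # hold course (wait and see)
```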

Complications

Unfortunately, Bubba has no intention of going around Nikola. As Bubba moves closer, the risk of collision increases within the situational episode. This puts Nikola into a state of annoyance: Bubba failed to act as predicted from prior experience, and so Nikola must take action in conflict with his core need of Esteem (albeit ever so slightly). In a mammalian, and specifically a primate, system, a physical altercation or brake-check might ensue, but Nikola doesn't have those kinds of evaluations or strategies in his repertoire. He must abide by defensive driving principles, so he merges to the right to let Bubba pass.

Turning Point

What Nikola doesn't realize is that Bubba's tailgating is a tactic to goad him into that very action, putting Nikola in line with the truck's exhaust. When Nikola takes the bait, Bubba is free to create an unsafe driving condition for the sake of his ego, so he uses illegal engine modifications to fill the area with noxious diesel exhaust. Now Nikola is blind. Suddenly losing visibility is a novel situation that puts Nikola into both situational and future uncertainty, with no established action strategies (Effect Capability) or inaction strategies (wait and see won't work here). According to Cynefin (or at least the version I mangled for this article), Nikola is in the Chaotic domain.

Crisis

Being in the Chaotic domain, Nikola has no choice but to get out of it and re-establish visibility and situational certainty. But he has only two choices in his strategy bucket for this situation: he can either change lanes or slow down to get out of the smoke. Each action has repercussions, and in the case of a self-driving car, simulations of possible actions are necessary to judge each one. The simulation engine in this fantasy architecture is tightly coupled with the perception and action engines, as these provide input into what are effectively imagined scenarios and possible risks. The output of the simulation is then judged by the appraisal process in much the same way other perceptions would be, but the simulated outcomes are linked to the previous events in the situational episode and treated as predictions, not sensations. The Future Certainty and Congruence dimensions of each scenario quickly vote up "slow down" as the least-bad choice.
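As a rough sketch of the "least-bad" vote, each option's simulated rollouts can be scored by expected harm and the minimum picked. Scoring by expected harm is a swapped-in stand-in for the Future Certainty and Congruence votes, and the rollout numbers are illustrative placeholders, not output from any real simulator.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Outcome:
    """One simulated rollout: did it end in a collision, and how bad would it be?"""
    collided: bool
    severity: float  # 0..1, relative impact if a collision happens


def expected_harm(rollouts: List[Outcome]) -> float:
    """Mean severity over rollouts; rollouts without a collision contribute zero."""
    return sum(o.severity for o in rollouts if o.collided) / len(rollouts)


def least_bad(options: Dict[str, List[Outcome]]) -> str:
    return min(options, key=lambda name: expected_harm(options[name]))


# Illustrative placeholders: blind lane changes collide more often and harder,
# slowing down occasionally risks a low-speed bump from behind.
options = {
    "change lanes": [Outcome(True, 0.9)] * 4 + [Outcome(False, 0.0)] * 6,
    "slow down": [Outcome(True, 0.2)] * 2 + [Outcome(False, 0.0)] * 8,
}
print(least_bad(options))  # slow down
```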

Climax and Resolution

Nikola slows down and comes clear of the smoke, but now traffic is stopped ahead of him. He can re-appraise the situation and find a new strategy to get him back on track to meet his esteem goal of getting Keith to the product meeting.

Conclusion

I wanted to write this about a cognitive architecture that used a vector of emotional valences to make these decisions based on a hierarchy of needs, in emulation of Uplift.bio, but I couldn't make it work. I got stuck trying to figure out how the emotional values were determined in any given situation and how they were directly anchored to needs. It seemed great when I thought about it, but when I wrote it down, everything fell apart. So then I had to do research! What I landed on was the Appraisal Theory work by Smith, Ellsworth, Lazarus and all the rest still working out that theory. It isn't comprehensive by any means (people have hundreds of names for emotions, some cultures have their own, and we steal their words), but it's a start, and it covers the basic ones. Grounding actions to affect through situational appraisal based on a set of needs provides a strong foundation for a neural-symbolic system to build strategies that can handle novel situations and reinforce other learning.

References

What follows is a list of research I may or may not have directly or indirectly mentioned in this article. It's really hard to keep track.
